This is a special guest blog post by Ted Dennis, an enterprise architecture consultant and Microsoft vTSP with over 15 years’ experience in designing, developing, and implementing state-of-the-art information technology systems for business, including enterprise architecture, application architecture, infrastructure, and network design.

Those of us who have been involved in enterprise architecture over the years have seen patterns come in and out of style, depending on the current technology and strategy. One constant for the last several years has been the Enterprise Service Bus (ESB). The ESB has been the great enabler for complex event processing and orchestration across multiple systems ranging from legacy mainframes to mobile application interactions in the field.

ESB products all have their strengths and weaknesses. Some are fantastic at real-time throughput while others excel at robust, guaranteed event processing and recoverability. Neuron ESB, which is my personal tool of choice, does both while providing virtually unlimited connectivity and orchestration options (shameless plug). But, with great power comes great responsibility!

A favorite expression of mine in enterprise and application architecture is, “just because you can do something doesn’t mean that you should.” In other words, there are typically multiple ways to accomplish a specific integration task. The “coolest” or most current technological approach may not be the best when disparate systems are at play or other big-picture factors exist (e.g., support staff capabilities).

Almost every important decision in architecture is a trade-off, with each enterprise’s unique technology footprint, capabilities, and strategy influencing the process. Some folks have suggested the ESB is no longer a requirement for extensible enterprise integration and that its usefulness should be re-evaluated. Within the context of a complex enterprise, I beg to differ. In fact, I would suggest Neuron ESB and similar products are becoming more important to technology strategy going forward, to ensure extensibility and the ability to support the ‘next greatest thing’, whatever that may be.

The current fascination with microservices can fall into this “just because you can” category. Microservices are typically small REST interfaces that do one thing and only one thing with great efficiency. While microservices are great for scaling out and reducing dependencies across projects and resources, the decentralized approach can create problems for the complex enterprise. Combining the right microservice strategy with the right ESB strategy, however, can be an awesome combination. If we add in one of our old architectural pattern friends, Command Query Responsibility Segregation (CQRS), the result can be magical.

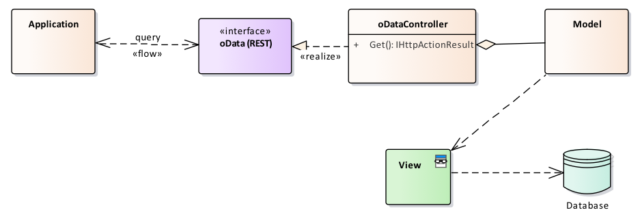

Figure 1: OData Microservice

CQRS, in a nutshell, simply means using different methodologies for reading and updating data. In most enterprise applications, the majority of traffic is read-only in nature. Why not provide the ability to scale read operations independently of much more expensive (resource-wise) write operations? Who wouldn’t like that? The problem that arises, though, is the overall increase in complexity. CQRS can be overkill for low-complexity scenarios. I would suggest to you, however, that enterprise integration is complex by its nature, and thus potentially a perfect use case for CQRS.
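To make the read/write split concrete, here is a minimal sketch in Python. It is not taken from any particular framework; the `OpenAccount` command, the two model classes, and the event tuple layout are all hypothetical names chosen for illustration. The write side validates and applies commands and records events, while the read side builds a separate view from those events and could be scaled on its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OpenAccount:
    """Command: a request to change state (hypothetical example)."""
    account_id: str
    owner: str


class AccountWriteModel:
    """Command side: validates and applies changes, then records events."""

    def __init__(self):
        self._accounts = {}
        self.events = []

    def handle(self, command: OpenAccount) -> None:
        if command.account_id in self._accounts:
            raise ValueError(f"account {command.account_id} already exists")
        self._accounts[command.account_id] = command.owner
        self.events.append(("AccountOpened", command.account_id, command.owner))


class AccountReadModel:
    """Query side: a separate, denormalized view built from events."""

    def __init__(self):
        self._view = {}

    def apply(self, event) -> None:
        name, account_id, owner = event
        if name == "AccountOpened":
            self._view[account_id] = {"id": account_id, "owner": owner}

    def get_account(self, account_id: str):
        return self._view.get(account_id)


writes = AccountWriteModel()
reads = AccountReadModel()

writes.handle(OpenAccount("A-1", "Example Owner"))
for event in writes.events:  # in practice, events would travel over a bus or queue
    reads.apply(event)

print(reads.get_account("A-1"))
```

The extra moving parts (commands, events, a second model) are exactly the added complexity mentioned above, which is why the pattern tends to pay off only when reads and writes really do have different scaling needs.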

If we go back to my statement about not necessarily doing things just because we ‘can’, the ESB shouldn’t be used for every situation either. In almost all of the enterprise integration scenarios that I have had the pleasure of working within, one of the greatest values of an ESB is the ability to coordinate events and data changes across many different systems.

Usually, reading data isn’t an event other systems are interested in, and neither are operations such as printing. There is not much value in pushing this traffic through the ESB just because you think some system in the future ‘might’ need to know about it. There are valid exceptions where it does make sense to use the ESB to broker read-only traffic, such as protocol or mediation, if your ESB platform is adequately enabled (a la Neuron ESB). Still, it should be the exception, not the rule, in larger implementations. Now, where did I hear about the concept of segregating read and write operations? Oh, yeah: CQRS. This is starting to sound very familiar.

So, stitching this all together, one can visualize an architecture in which read-only operations are primarily handled by discrete, independent microservices. Update operations, which typically may need to feed or notify multiple systems, are primarily brokered by the ESB. The result is CQRS at a macro level.

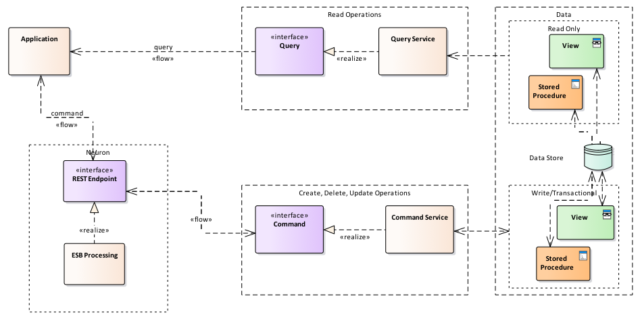

Figure 2: CQRS via Neuron ESB
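The macro-level split can be sketched as a small router: queries go straight to a read microservice, while commands are handed to a single bus endpoint. Everything here is an assumption for illustration, including the `MacroCqrsRouter` class, the `get_balance` service, and the `esb_endpoint` function, which stands in for whatever interface the ESB actually exposes.

```python
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]
Handler = Callable[[Message], Message]


class MacroCqrsRouter:
    """Sends queries directly to read services and commands to the bus."""

    def __init__(self, bus_endpoint: Handler) -> None:
        self._query_services: Dict[str, Handler] = {}
        self._bus_endpoint = bus_endpoint

    def register_query(self, name: str, service: Handler) -> None:
        self._query_services[name] = service

    def query(self, name: str, params: Message) -> Message:
        # Read path: no bus involved, so read services scale independently.
        return self._query_services[name](params)

    def command(self, name: str, payload: Message) -> Message:
        # Write path: always brokered, so other systems can be notified.
        return self._bus_endpoint({"command": name, "payload": payload})


bus_log: List[Message] = []


def esb_endpoint(message: Message) -> Message:
    """Hypothetical stand-in for an ESB client endpoint."""
    bus_log.append(message)
    return {"accepted": True}


def get_balance(params: Message) -> Message:
    """Hypothetical read-only microservice."""
    return {"account": params["account"], "balance": 100}


router = MacroCqrsRouter(esb_endpoint)
router.register_query("get_balance", get_balance)

balance = router.query("get_balance", {"account": "A-1"})
ack = router.command("transfer", {"from": "A-1", "to": "A-2", "amount": 25})
```

After this runs, only the `transfer` command has passed through the bus; the balance lookup never touched it.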

I have a financial services customer that is implementing just this strategy. Microservices are being deployed on Azure Service Fabric (on-premises) to take advantage of the auto-scaling, management and robustness provided. Command (update) services are invoked by calling an interface on Neuron ESB (more or less like a wiretap/proxy) which then calls the ‘real’ service.

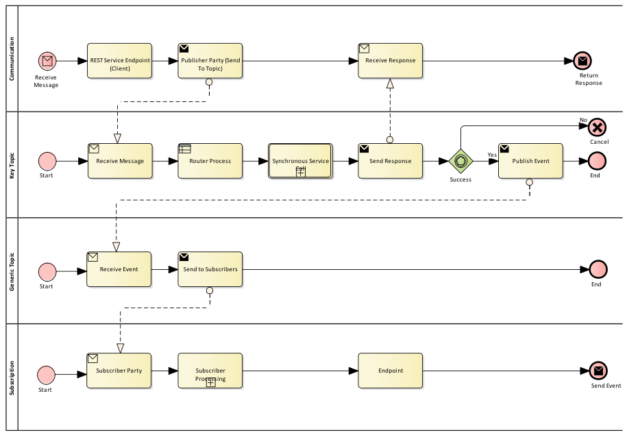

Figure 3: Generic Neuron ESB flow
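A wiretap-style proxy of this kind can be sketched in a few lines. This is an in-memory illustration only, not Neuron ESB's API: `InMemoryBus`, `wiretap`, the `customer.address` topic, and `update_address` are all hypothetical. The caller still receives the real service's response, while a copy of the exchange is published for any subscriber that cares.

```python
from collections import defaultdict
from typing import Any, Callable, Dict, List

Message = Dict[str, Any]


class InMemoryBus:
    """Toy publish/subscribe bus; a stand-in for a real ESB topic."""

    def __init__(self) -> None:
        self._subscribers: Dict[str, List[Callable[[Message], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[Message], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: Message) -> None:
        for handler in self._subscribers[topic]:
            handler(message)


def wiretap(bus: InMemoryBus, topic: str,
            real_service: Callable[[Message], Message]) -> Callable[[Message], Message]:
    """Wrap real_service so each call is also copied to the bus."""

    def proxy(request: Message) -> Message:
        response = real_service(request)  # the caller still gets the real answer
        bus.publish(topic, {"request": request, "response": response})
        return response

    return proxy


def update_address(request: Message) -> Message:
    """Hypothetical 'real' command service."""
    return {"customer": request["customer"], "status": "updated"}


bus = InMemoryBus()
audit_log: List[Message] = []
bus.subscribe("customer.address", audit_log.append)

update_via_bus = wiretap(bus, "customer.address", update_address)
response = update_via_bus({"customer": "C-42", "address": "1 Main St"})
```

New subscribers can be added later without touching either the caller or the real service, which is the main appeal of putting the proxy on the bus.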

Result-event messages are published to the bus and can be consumed by any interested subscriber system. In complex event processing scenarios, compensation transactions are managed in Neuron ESB as well. For example, if there is a process that calls three (3) external services and the second one fails, the first transaction can be compensated by invoking an offsetting service method (such as a ‘delete’ after a previous ‘add’, or the reversal of an earlier update).
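The three-service example can be sketched as follows. This is a generic illustration of compensation, not how Neuron ESB implements it; `run_with_compensation` and the three service functions are hypothetical. Each step is paired with an offsetting action, and when a step fails, the offsets for the steps already completed are invoked in reverse order.

```python
from typing import Callable, List, Tuple

Step = Tuple[Callable[[], None], Callable[[], None]]  # (action, compensation)


def run_with_compensation(steps: List[Step]) -> bool:
    """Run actions in order; on failure, undo completed ones in reverse."""
    completed: List[Callable[[], None]] = []
    for action, compensate in steps:
        try:
            action()
        except Exception:
            for undo in reversed(completed):
                undo()
            return False
        completed.append(compensate)
    return True


ledger: List[str] = []
calls: List[str] = []


def add_entry() -> None:
    calls.append("add")
    ledger.append("entry-1")


def delete_entry() -> None:
    # Offsetting 'delete' for the earlier 'add'.
    calls.append("delete")
    ledger.remove("entry-1")


def failing_service() -> None:
    calls.append("second")
    raise RuntimeError("second service unavailable")


def third_service() -> None:
    calls.append("third")


succeeded = run_with_compensation([
    (add_entry, delete_entry),
    (failing_service, lambda: None),
    (third_service, lambda: None),
])
```

In this run the second service fails, so the first step's ‘add’ is offset by its ‘delete’ and the third service is never called. A production version would also need to handle a compensation that itself fails, which this sketch deliberately ignores.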

By using Neuron ESB as a companion to a microservices strategy with CQRS, complex integration can still be managed and controlled as needed without sacrificing most of the benefits microservices afford. Neuron ESB brings order to integration chaos, allowing systems to communicate using the best method available for each unique set of constraints.

This was a special guest blog post by Ted Dennis, Enterprise Architecture Consultant and Microsoft vTSP.
